Dimensionality Reduction in Data Mining Application: A Systematic Review

Abstract

The rapid growth of high-dimensional data introduces redundancy and noise that hinder data mining and machine learning, a challenge commonly known as the curse of dimensionality. Dimensionality Reduction (DR) techniques, including feature selection and feature extraction, alleviate these issues by reducing computational complexity while maintaining informative structures and enhancing predictive accuracy. This review systematically examines classical DR methods such as Principal Component Analysis (PCA) and its variants, alongside recent advances in nonlinear and manifold learning approaches, including Isomap and Locally Linear Embedding (LLE). Emerging probabilistic, soft computing, automated pipeline, and semi-supervised DR techniques are also discussed. Applications across bioinformatics, recommender systems, trajectory mining, and disease diagnosis are reviewed, with comparative analyses highlighting the strengths and limitations of each approach.

Article Details

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License (CC BY-NC-SA 4.0): https://creativecommons.org/licenses/by-nc-sa/4.0/

References

R. R. Zebari, A. M. Abdulazeez, D. Q. Zeebaree, D. A. Zebari, and J. N. Saeed, “A comprehensive review of dimensionality reduction techniques for feature selection and feature extraction,” Journal of Applied Science and Technology Trends, pp. 56–70, 2020.

P. Ray, S. S. Reddy, and T. Banerjee, “Various dimension reduction techniques for high dimensional data analysis: A review,” 2021.

C. O. S. Sorzano, J. Vargas, and A. Pascual-Montano, “A survey of dimensionality reduction techniques,” arXiv preprint, arXiv:1403.2877, 2014.

D. A. Zebari et al., “A comprehensive review of dimensionality reduction techniques for efficient computation,” Journal of Applied Science and Technology Trends, vol. 1, no. 2, pp. 56–70, 2020.

H. Xie, J. Li, and H. Xue, “A survey of dimensionality reduction techniques based on random projection,” arXiv preprint, arXiv:1706.04371, 2017.

W. Jia, M. Sun, J. Lian, and S. Hou, “Feature dimensionality reduction: A review,” Complex & Intelligent Systems, vol. 8, pp. 2663–2693, 2022.

W. Jia, M. Sun, J. Lian, and S. Hou, “Feature dimensionality reduction: A review,” Complex & Intelligent Systems, vol. 8, pp. 2663–2693, 2022.

I. Guyon and A. Elisseeff, “An introduction to variable and feature selection,” Journal of Machine Learning Research, vol. 3, pp. 1150–1183, 2003.

L. van der Maaten, E. Postma, and H. J. van der Herik, “Dimensionality reduction: A comparative review,” Journal of Machine Learning Research, vol. 8, pp. 2663–2693, 2007.

S. T. Roweis and L. K. Saul, “Nonlinear dimensionality reduction by locally linear embedding,” Science, vol. 290, no. 5500, pp. 2323–2326, 2000.

G. T. Reddy et al., “Analysis of dimensionality reduction techniques on big data,” IEEE Access, pp. 1–1, 2020.

M. Espadoto et al., “Towards a quantitative survey of dimension reduction techniques,” IEEE Transactions on Visualization and Computer Graphics, 2019.

A. M. Alhassan and W. M. N. W. Zainon, “Review of feature selection, dimensionality reduction and classification for chronic disease diagnosis,” in Proc. IEEE, 2021.

B. M. S. Hasan and A. M. Abdulazeez, “A review of principal component analysis algorithm for dimensionality reduction,” Journal of Soft Computing and Data Mining, vol. 2, no. 1, pp. 20–30, 2021.

G. T. Reddy et al., “Analysis of dimensionality reduction techniques on big data,” IEEE Access, vol. 8, 2020.

V. Svetnik, “Comprehensive review of dimensionality reduction algorithms: challenges, solutions, and future directions,” PeerJ Computer Science, 2025.

B. M. Sarwar, G. Karypis, J. A. Konstan, and J. T. Riedl, “Application of dimensionality reduction in recommender system – A case study,” Univ. of Minnesota, 2000.

S. Ayesha, M. K. Hanif, and R. Talib, “Overview and comparative study of dimensionality reduction techniques for high dimensional data,” Information Fusion, vol. 59, pp. 44–58, 2020.

Senzhang Wang , Jiannong Cas, Philip S.Yu,”Deep learning for Spatio-temporal Data Mining:A Survey”,IEEE Transactions on Knowledg,Vol 34 Issue 8.

T. Hastie, R. Tibshirani, and J. H. Friedman, The Elements of Statistical Learning: Data Mining, Inference, and Prediction, 2nd ed. Springer, 2009.

M. Espadoto et al., “Toward a quantitative survey of dimension reduction techniques,” IEEE Transactions on Visualization and Computer Graphics, vol. 27, no. 3, pp. 2153–2173, Mar. 2021.

S. Marukatat, “Tutorial on PCA and approximate kernel PCA,” Artificial Intelligence Review, vol. 56, no. 6, pp. 5445–5477, 2023.

I. T. Jolliffe, Principal Component Analysis, 2nd ed., Springer, 2002.

H. Abdi and L. J. Williams, “Principal component analysis,” WIREs Computational Statistics, vol. 2, no. 4, pp. 433–459, 2010.

I. Jolliffe and J. Cadima, “Principal component analysis: A review and recent developments,” Phil. Trans. R. Soc. A, vol. 379, 2021.

S. Wold, K. Esbensen, and P. Geladi, “Principal component analysis,” Chemometrics and Intelligent Laboratory Systems, vol. 2, pp. 37–52, 1987.

J. Shlens, “A tutorial on principal component analysis,” arXiv:1404.1100, 2014.

T. Hastie, R. Tibshirani, and J. Friedman, The Elements of Statistical Learning, 2nd ed., Springer, 2009.

A. Sharma and K. Paliwal, “Fast principal component analysis using fixed-point algorithm,” Pattern Recognition Letters, vol. 28, pp. 1151–1155, 2007.

B. Schölkopf, A. Smola, and K. R. Müller, “Nonlinear component analysis as a kernel eigenvalue problem,” Neural Computation, vol. 10, no. 5, pp. 1299–1319, 1998.

D. J. Hand, “Classifier technology and the illusion of progress,” Statistical Science, vol. 21, pp. 1–14, 2006.

A. Hyvärinen and E. Oja, “Independent component analysis: Algorithms and applications,” Neural Networks, vol. 13, pp. 411–430, 2000.

D. L. Donoho and C. Grimes, “Hessian eigenmaps...,” PNAS, vol. 100, pp. 5591–5596, 2003.

L. van der Maaten and G. Hinton, “Visualizing data using t-SNE,” Journal of Machine Learning Research, vol. 9, pp. 2579–2605, 2008.

D. Theng and K. K. Bhoyar, “Feature selection techniques for machine learning: A survey...,” Knowledge and Information Systems, vol. 66, pp. 1575–1637, 2024.

A. Radhika and M. S. Masood, “Effective dimensionality reduction by using soft computing method in data mining techniques,” Springer-Verlag, 2021.

S. Londhe and M. Patil, “Dimensional reduction techniques for huge volume of data,” International Journal for Research in Applied Science and Engineering Technology (IJRASET), vol. 10, no. 3, pp. 125–138, 2022.

N. Sharma and K. Saroha, “Study of dimension reduction methodologies in data mining,” in Proc. ICCCA, pp. 133–136, 2015.

R. R. Zebari et al., “A comprehensive review of dimensionality reduction techniques for feature selection and feature extraction,” Journal of Applied Science and Technology Trends, vol. 1, no. 2, pp. 56–70, 2020.

L. Qu and Y. Pei, “A comprehensive review on discriminant analysis for addressing challenges of class-level limitations, small sample size, and robustness,” Processes, vol. 12, no. 7, p. 1382, 2024.

M. Belkin and P. Niyogi, “Laplacian eigenmaps for dimensionality reduction and data representation,” Neural Computation, vol. 15, no. 6, pp. 1373–1396, 2003.

R. R. Coifman and S. Lafon, “Diffusion maps,” Applied and Computational Harmonic Analysis, vol. 21, no. 1, pp. 5–30, 2006.

H. Liao et al., “A survey of deep learning technologies for intrusion detection in Internet of Things,” IEEE Access, 2024.

S. Velliangiri, S. Alagumuthukrishnan, and S. I. T. Joseph, “A review of dimensionality reduction techniques for efficient computation,” Procedia Computer Science, vol. 165, pp. 104–111, 2019.

K. Thangavel and A. Pethalakshmi, “Dimensionality reduction based on rough set theory: A review,” Applied Soft Computing, vol. 9, pp. 1–12, 2009.

H. E. Abdelkader, A. G. Gad, A. A. Abohany, and S. E. Sorour, “An efficient data mining technique for assessing satisfaction level with online learning...,” IEEE Access, vol. 10, 2022.

R. Houari, A. Bounceur, M.-T. Kechadi, A.-K. Tari, and R. Euler, “Dimensionality reduction in data mining: A Copula approach,” 2016.

M. M. Ahsan et al., “Effect of data scaling methods on machine learning algorithms and model performance,” Technologies, vol. 9, no. 3, p. 52, 2021.

M. Ali, R. Borgo, and M. W. Jones, “Concurrent time-series selections using deep learning and dimension reduction,” Knowledge-Based Systems, vol. 233, p. 107507, 2021.

I. Jolliffe and J. Cadima, “Principal component analysis...,” Phil. Trans. R. Soc. A, vol. 379, no. 2191, 2021.

Y. Bengio, A. Courville, and P. Vincent, “Representation learning: A review and new perspectives,” IEEE TPAMI, vol. 35, no. 8, pp. 1798–1828, 2013.

V. N. Vapnik, The Nature of Statistical Learning Theory, Springer, 1995.

H. Garcia-Laencina, J.-L. Sancho-Gómez, and A. R. Figueiras-Vidal, “Pattern classification with missing data: A review,” Neural Computing and Applications, vol. 19, no. 2, pp. 263–282, 2010.

A. Jović, K. Brkić, and N. Bogunović, “A review of feature selection methods with applications,” in Proc. MIPRO, 2015, pp. 1200–1205.

V. Borisov et al., “Deep neural networks and tabular data: A survey,” IEEE Transactions on Neural Networks and Learning Systems, vol. 35, no. 6, pp. 1–21, 2022.

D. A. Freedman, Statistical Models: Theory and Practice, Cambridge Univ. Press, 2009.

M. M. Ahsan et al., “Effect of data scaling methods on machine learning algorithms and model performance,” Technologies, vol. 9, no. 3, p. 52, 2021.

S. Raschka and V. Mirjalili, Python Machine Learning, 3rd ed., Packt, 2019.

E. Bonabeau, “Agent-based modeling: Methods and techniques...,” PNAS, vol. 99, pp. 7280–7287, 2002.

C. M. Macal and M. J. North, “Tutorial on agent-based modeling...,” Journal of Simulation, vol. 4, no. 3, pp. 151–162, 2010.

G. Chandrashekar and F. Sahin, “A survey on feature selection methods,” Computers & Electrical Engineering, vol. 40, no. 1, pp. 16–28, 2014.

R. Pugliese, S. Regondi, and R. Marini, “Machine learning-based approach: Global trends, research directions, and regulatory standpoints,” Data Science and Management, vol. 4, pp. 19–29, 2021.

S. Ayesha, M. K. Hanif, and R. Talib, “Overview and comparative study of dimensionality reduction techniques for high dimensional data,” Information Fusion, vol. 59, pp. 44–58, 2020.

F. Ros and R. Riad, Feature and Dimensionality Reduction for Clustering with Deep Learning. 2024.

S. Velliangiri, S. Alagumuthukrishnan, and S. I. Thankumar Joseph, “A review of dimensionality reduction techniques for efficient computation,” Procedia Computer Science, vol. 165, pp. 104–111, 2019.

G. James, D. Witten, T. Hastie, and R. Tibshirani, An Introduction to Statistical Learning. Springer, 2013.

A. K. Saxena, R. Pandey, and N. K. Singh, “Dimension reduction techniques for optimization,” in Proc. Int. Conf. Computing, Sciences and Communications (ICCSC), IEEE, 2024.

C. K. J. Hou and K. Behdinan, “Dimensionality reduction in surrogate modeling: A review,” Data Science and Engineering, vol. 7, pp. 402–427, 2022.

W. Jia, M. Sun, J. Lian, and S. Hou, “Feature dimensionality reduction: A review,” Complex & Intelligent Systems, vol. 8, no. 3, pp. 2663–2693, 2022.

D. Patel, A. Saxena, and J. Wang, “A machine learning-based wrapper method for feature selection,” International Journal of Data Warehousing and Mining, vol. 20, no. 1, Jan. 2024.

S. T. Roweis and L. K. Saul, “Nonlinear dimensionality reduction by locally linear embedding,” Science, vol. 290, no. 5500, pp. 2323–2326, 2000.

R. J. Urbanowicz et al., “Relief-based feature selection: Introduction and review,” Journal of Biomedical Informatics, vol. 85, pp. 189–203, 2018.

H. Peng, F. Long, and C. Ding, “Feature selection based on mutual information criteria of max-relevance and min-redundancy,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 27, no. 8, pp. 1226–1238, Aug. 2005.

D. Sharma, P. Gaur, and A. P. Mittal, “Comparative analysis of hybrid GAPSO optimization technique with GA and PSO methods for cost optimization of an off-grid hybrid energy system,” Energy Technology & Policy, vol. 1, no. 1, 2014.

M. Qurashi, T. Ma, and E. Chaniotakis, “PC–SPSA: Employing dimensionality reduction to limit SPSA search noise in DTA model calibration,” IEEE Transactions on Intelligent Transportation Systems, vol. 21, no. 4, 2020.

A. O. Salau and S. Jain, “Feature extraction: A survey of the types, techniques, applications,” in Proc. Int. Conf. Signal Processing and Communication (ICSC), 2019.

D. P. Kingma and M. Welling, “Auto-encoding variational Bayes,” arXiv:1312.6114, 2013.

P. Vincent, H. Larochelle, Y. Bengio, and P.-A. Manzagol, “Extracting and composing robust features with denoising autoencoders,” in Proc. ICML, pp. 1096–1103, 2008.

S. Lespinats, B. Colnge, and D. Dutykh, Nonlinear Dimensionality Reduction Techniques: A Data Structure Preservation Approach. 2022.

L. McInnes, J. Healy, and J. Melville, “UMAP: Uniform manifold approximation and projection for dimension reduction,” arXiv:1802.03426, 2018.

L. Viale, A. P. Daga, A. Fasana, and L. Garibaldi, “Dimensionality reduction methods of a clustered dataset for the diagnosis of a SCADA-equipped complex machine,” Machines, vol. 11, no. 1, p. 36, 2023.

S. Kumari, V. Panchal, and G. Kaur, “Methods for dimension reduction,” International Journal for Technological Research in Engineering, vol. 9, no. 9, May 2022.

A. Mumuni and F. Mumuni, “Automated data processing and feature engineering for deep learning and big data applications: A survey,” Journal of Information and Intelligence, 2024.

G. T. Reddy et al., “Analysis of dimensionality reduction techniques on big data,” IEEE Access, vol. 8, 2020.

R. Rani et al., “Big data dimensionality reduction techniques in IoT: Review and taxonomy,” Cluster Computing, vol. 25, no. 8, pp. 1–23, 2022.

D. Zhang, Z.-H. Zhou, and S. Chen, “Semi-supervised dimensionality reduction,” 2020.

B. A. Kitchenham and S. Charters, Guidelines for Performing Systematic Literature Reviews in Software Engineering, EBSE-2007-01, 2007.

M. Brereton, B. A. Kitchenham, D. Budgen, M. Turner, and P. Khalil, “Lessons from applying the systematic literature review process within the software engineering domain,” Journal of Systems and Software, vol. 80, no. 4, pp. 571–583, 2007.

H. Liu and H. Motoda, Feature Selection for Knowledge Discovery and Data Mining. Springer, 1998.

D. Moher et al., “Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement,” PLoS Medicine, vol. 6, no. 7, p. e1000097, 2009.

P. Petticrew and H. Roberts, Systematic Reviews in the Social Sciences: A Practical Guide. Wiley-Blackwell, 2006.

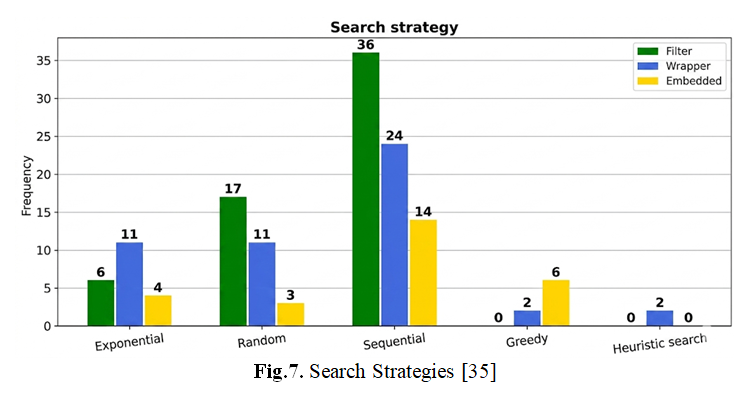

J. Shlens, “A tutorial on principal component analysis,” arXiv preprint, arXiv:1404.1100, 2014.

J. A. Lee and M. Verleysen, Nonlinear Dimensionality Reduction. Springer, 2007.

M. Dash and H. Liu, “Feature selection for classification,” Intelligent Data Analysis, vol. 1, no. 1–4, pp. 131–156, 1997.